Universal Memory for AI Agents.

Persistent context. Real intelligence.Give your agents the ability to learn, remember, and personalise interactions. A secure, local-first memory infrastructure for the next generation of LLM applications.

Specialised Solutions

Generic RAG fails in domain-specific environments. We deploy tailored architectures that understand your unique organisational knowledge.

Music & Creative

Intelligent retrieval for rights management, stem analysis, and creative metadata. Designed for the complexities of modern media production.

Business Solutions

Streamline customer support and internal knowledge management with a personalised RAG layer.

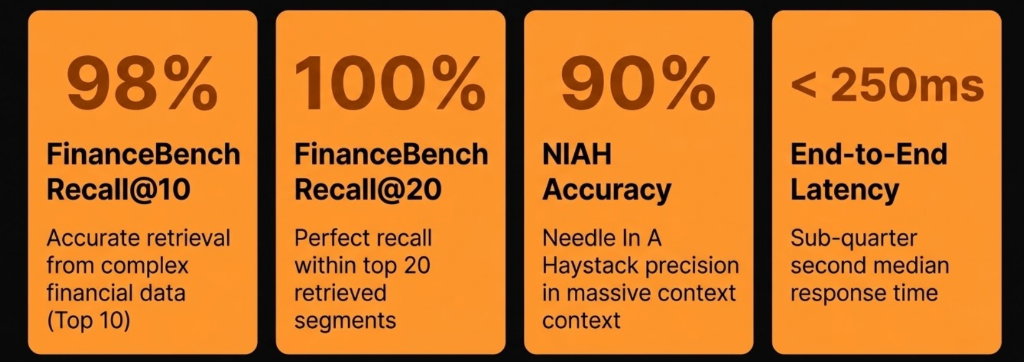

Financial Analysis

High-precision retrieval for market sentiment, regulatory filings, and risk modelling workflows.

Visualise your memory as a graph

Gain deep insights into AI performance. Look inside the brain of your agent to see where it went off track and how it connects complex information.

Traceability

See exactly which memory clusters were activated for any given response.

Performance Audit

Identify weak connections and refine your agent's knowledge base in real-time.

Neural Mapping

Explore the semantic relationships between disparate data points in your organisation.

The Core Comparison

Understanding the fundamental difference between fragmented retrieval and intelligent memory mapping.

"Dumb" Text Retrieval

Most AI apps use standard vector databases. They treat human conversation like a scattered pile of sticky notes.

If a user asks, "What did we discuss about Python?", a standard system just searches for matching keywords and returns fragmented, isolated quotes:

"I like Python."

"Python has pandas."

"FastAPI is great."

Your AI gets the words, but completely loses the context of why they were said.

Relational Memory Mapping

We built a memory engine that mimics human cognition. Instead of scattering sentences, our system automatically groups related conversations into structured knowledge concepts.

When a user asks about Python, our API doesn't just return a quote, it returns the complete, organised context.

Why Developers Choose Us

Self-Organising Context

No manual tagging or complex prompt engineering required. Our memory engine actively analyses the conversational flow in the background.

Topic Evolution & Branching

Human conversations aren't linear; they branch out. When a user switches from talking about "API Security" to "Frontend Frameworks," our system maps that relationship.

Continuous Learning

Our intelligent semantic engine prevents memory bloat. If a user brings up a topic again weeks later, our system knows to gracefully update and merge the existing knowledge.

Full-Picture Retrieval

Never lose the narrative thread.

Technical Architecture

A schematic overview of our retrieval-augmented generation pipeline.

Intent Analysis

Decomposing the query into semantic entities and identifying relevant memory clusters.

Local Retrieval

High-speed vector search within your private infrastructure, ensuring zero data leakage.

Context Synthesis

Blending retrieved facts with agent personality for a grounded, personalised response.

Request Early Access

Universal Memory is currently in private beta. Join the waitlist to help shape the future of integrated AI memory.

We'll keep you updated on development progress and beta availability.

We build AI that remembers you, but we built a database that can't read you.

Most AI companies hoard your chat logs in plain text. We think that's a massive security risk. Instead, we use a Zero-Logging, Encrypted-at-Rest architecture. Your data is processed in milliseconds, encrypted instantly, and then digitally shredded from our active memory.

The AI Gets the Meaning, Not the Words (Vectorization)

When you send a message, our system immediately converts your English sentences into a mathematical map (called a Vector Embedding). To the AI, it looks like [0.015, -0.892, 0.441]. This math represents the meaning of your message, making it impossible to reverse-engineer back into your exact words.

The Digital Shredder (Zero-RAM Logging)

Your actual words are held in our server's temporary memory (RAM) for just a fraction of a second, only long enough to route it to the AI brain. The moment the AI replies, our system's Garbage Collector physically wipes the temporary memory. We don't write your prompts to our logs. If it's not in the logs, it can't be leaked.

The Titanium Vault (AES-256 Encryption)

Before your conversation is saved to your long-term memory profile, it is locked using AES-256 Application-Level Encryption. This means by the time your data hits our database, it is completely scrambled.

Frequently Asked Questions

Everything you need to know about our universal memory infrastructure.

Is my data truly private?

Yes. Our local version of the memory service is designed for privacy-first deployment. Your data never leaves your infrastructure, ensuring complete sovereignty and security.

How does it differ from standard RAG?

Standard RAG is often stateless. Our universal memory provides a persistent context layer that allows you to visualise your AI agent's memory and track/debug performance issues by searching exactly where the AI diverged off track.

Can I integrate this with existing LLMs?

Absolutely. We provide a clean API that works with all major LLM providers and local models, acting as a sophisticated middleware for context management.

What are the performance overheads?

Minimal. Our vector search and retrieval pipeline are optimised for high-speed, low-latency performance, even with massive organisational datasets.

Can your database administrators read my chat history?

No. Even if our lead engineer queries the database directly, all they will see are mathematical arrays and AES-256 ciphertext (gibberish).

What happens if your database gets hacked?

If a bad actor steals our entire database, they get nothing but encrypted blobs and numbers.

Does the AI model provider see my data?

To generate a response, the temporary plaintext is securely transmitted to our AI model provider via TLS encryption. However, they are legally bound by enterprise agreements not to use your data to train their models, and the data is discarded after the generation is complete. Our servers act as a blindfolded middleman.

Transparent, Scale-Ready Pricing

Choose the tier that fits your growth. From individual developers to enterprise-grade security.

Developer

Perfect for testing and individual projects.

- 1,000 Memories

- 500 API calls / month

- Graph memory out of the box

- AES-256 App-Level Encryption

- Standard Auto-Merge

Launch

For startups and developers testing real apps.

- 100,000 Memories

- 10,000 API calls / month

- Everything in Developer

- Version History & Rollback

- Topic Switch Detection

- Email Support

Scale

For growing B2B SaaS processing real traffic.

- 500,000 Memories

- 50,000 API calls / month

- Everything in Launch

- Dedicated Async Workers

- Analytics & Insights

- Export/Import tools

- Priority Support

Enterprise

For corporate clients needing absolute security.

- Unlimited / Custom Volume

- Bring Your Own Key (BYOK)

- Dedicated Neon PostgreSQL

- HIPAA / SOC2 Compliance

- Custom SLA & 24/7 Phone