The logic was simple: turn text into math, find similar math, and feed it to the prompt. But as we move into the era of Agentic Workflows, developers are hitting what we call the "Vector Wall."

The Vector Wall

Standard RAG treats your user's history like a pile of scattered sticky notes. When you query a vector database, it looks for semantic similarity, but it has no concept of relationships, hierarchy, or time.

If a user says, "I'm moving away from React to Vue," and later asks, "What is my preferred frontend stack?", a standard vector search can surface the migration note alongside any earlier React-related memories, because they all sit close to the query in embedding space and nothing marks which one is current. The AI gets a fragmented mess of conflicting data.
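To make the failure concrete, here is a minimal sketch of similarity-only retrieval. Everything in it is an assumption for illustration: the bag-of-words `embed` function stands in for a real embedding model, and the three stored notes are hypothetical. The point is only that ranking by similarity ignores when a note was written.

```python
import math
import re
from collections import Counter

def embed(text):
    # Toy bag-of-words "embedding" (an assumption; real systems use a learned model).
    return Counter(re.findall(r"[a-z]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

# Hypothetical user history as (order written, text). Note 2 supersedes note 1.
notes = [
    (1, "My preferred frontend stack is React"),
    (2, "I am moving my frontend stack from React to Vue"),
    (3, "Remind me to file quarterly taxes"),
]

def top_k(query, k=2):
    q = embed(query)
    # Rank purely by similarity; the order-written field is never consulted.
    return sorted(notes, key=lambda n: cosine(q, embed(n[1])), reverse=True)[:k]

results = top_k("What is my preferred frontend stack?")
for order, text in results:
    print(order, text)
```

In this toy setup both conflicting notes come back, and the stale React preference ranks above the newer Vue note simply because it shares more words with the question.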

The result? Your agent hallucinates, repeats old information, or loses the narrative thread entirely.

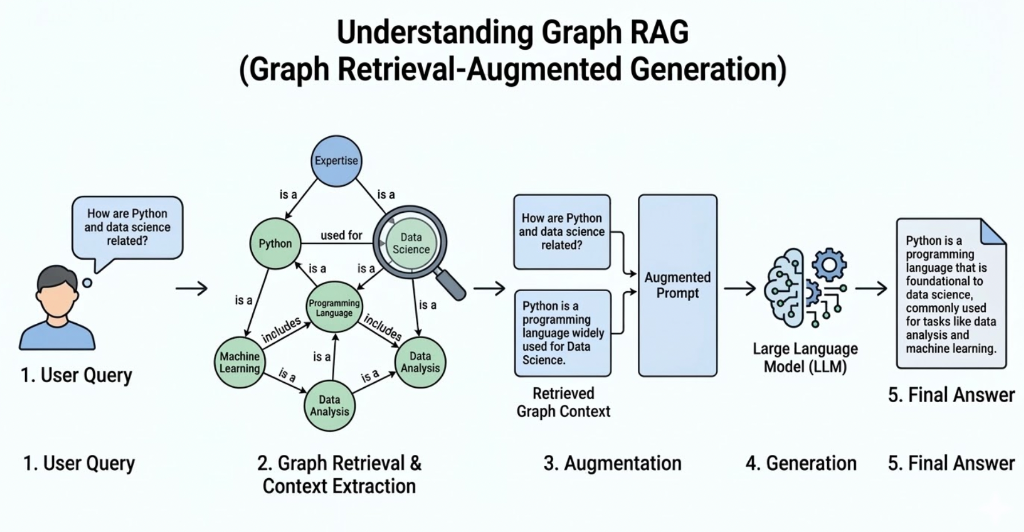

Visualizing the 'Vector Wall': Standard retrieval (right) yields fragmented data snippets. Maple Memory's Cognitive Graph (left) mimics human cognition by mapping semantic relationships between memories.

Introducing the Cognitive Graph

At Maple Memory, we don’t just store vectors; we map Cognitive Architecture. Instead of a flat list, we build a relational graph that mimics human memory. We use a proprietary logic we call Autonomous Memory Routing to manage how information is stored and retrieved.

How it Works: Isolate, Branch, and Merge

To solve the "Vector Wall," our engine evaluates every incoming interaction through three distinct logical paths:

- Isolate (New Context): When a user starts a completely new topic, say, switching from "Quarterly Taxes" to "Gardening Tips", Maple Memory recognises the lack of semantic connection. It Isolates this memory, creating a new "seed node" in the graph so your tax context doesn't pollute your gardening advice.

- Branch (Evolution): Human thoughts aren't linear. If a user discusses "FastAPI" and then moves into "Async Database Drivers," Maple Memory Branches the memory. It maintains the link to FastAPI but acknowledges that the conversation has evolved into a specialised sub-topic.

- Merge (Refinement): This is where we kill "Memory Bloat." If a user clarifies a previous thought or repeats a preference, Maple Memory doesn't create a duplicate. It Merges the new data into the existing node, updating the "Truth" of that memory while preserving the historical link.
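The three paths above can be sketched as a simple routing decision. This is not Maple Memory's Autonomous Memory Routing, which is proprietary; it is a rough illustration that assumes a word-overlap similarity score and two made-up thresholds (`merge_at`, `branch_at`). The `Node` and `MemoryGraph` names are hypothetical as well.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

def tokens(text):
    return set(re.findall(r"[a-z]+", text.lower()))

def similarity(a, b):
    # Jaccard word overlap, standing in for a real semantic similarity (assumption).
    ta, tb = tokens(a), tokens(b)
    return len(ta & tb) / len(ta | tb) if ta | tb else 0.0

@dataclass
class Node:
    text: str
    parent: Optional["Node"] = None
    history: list = field(default_factory=list)  # earlier versions of this memory

class MemoryGraph:
    def __init__(self, merge_at=0.6, branch_at=0.2):  # illustrative thresholds
        self.nodes = []
        self.merge_at = merge_at
        self.branch_at = branch_at

    def add(self, text):
        best = max(self.nodes, key=lambda n: similarity(n.text, text), default=None)
        score = similarity(best.text, text) if best else 0.0
        if best and score >= self.merge_at:
            # Merge: update the node's current text, keep the old version as history.
            best.history.append(best.text)
            best.text = text
            return "merge"
        if best and score >= self.branch_at:
            # Branch: new node that stays linked to the related memory.
            self.nodes.append(Node(text, parent=best))
            return "branch"
        # Isolate: unrelated topic becomes a new seed node with no parent.
        self.nodes.append(Node(text))
        return "isolate"

graph = MemoryGraph()
decisions = [
    graph.add("I build my API with FastAPI"),
    graph.add("I need async database drivers with FastAPI"),
    graph.add("I build my API with FastAPI and Pydantic"),
    graph.add("Tomato seedlings want full sun"),
]
print(decisions)
```

With these sample inputs the four interactions are routed as isolate, branch, merge, isolate, leaving three nodes: the FastAPI seed (updated in place, with its earlier wording kept in `history`), the async-drivers branch pointing back to it, and an unconnected gardening seed.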

The Benchmark: 98% Recall@10

Because we use Relational Mapping instead of "dumb" text retrieval, Maple Memory achieves a 98% FinanceBench Recall@10 score.
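For readers unfamiliar with the metric, Recall@10 is commonly defined as the share of relevant items that appear among the top 10 retrieved results. The helper below is a small sketch of that common definition, with hypothetical document IDs; it does not describe how the FinanceBench figure above was measured.

```python
def recall_at_k(retrieved, relevant, k=10):
    """Fraction of relevant items found in the first k retrieved items."""
    relevant = set(relevant)
    if not relevant:
        raise ValueError("relevant must contain at least one item")
    top = set(retrieved[:k])
    return len(top & relevant) / len(relevant)

# Hypothetical ranking: one of the two relevant documents is in the top 3.
score = recall_at_k(["d3", "d7", "d1", "d9"], {"d1", "d2"}, k=3)
print(score)
```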

While standard Vector DBs struggle with "Needle In A Haystack" (NIAH) tests as context grows, Maple Memory’s graph structure allows the AI to "zoom in" on the exact cluster of relevant memories, ignoring the noise.

| Feature | Standard Vector RAG | Maple Memory Cognitive Graph |
|---|---|---|
| Logic | Flat Similarity | Relational Hierarchy |
| Context | Fragmented Snippets | Structured Narratives |
| Accuracy | Degrades over time | Improves with interaction |
| Hallucinations | High (conflicting data) | Low (deduplicated truth) |

Stop Storing Data. Start Building Intelligence.

If you are building an agent that needs to actually know its user, to remember that they hated that one specific UI change three weeks ago, or that their project requirements evolved on Tuesday, you need more than a vector.

Maple Memory gives your agents the ability to learn, not just search.